Prithvijit Chattopadhyay

Research Scientist, NVIDIA Cosmos Lab

Ph.D., Georgia Tech (2019–2024)

advised by Judy Hoffman

★ Rising Star Doctoral Student Award

M.S., Georgia Tech (2017–2019)

advised by Devi Parikh

About

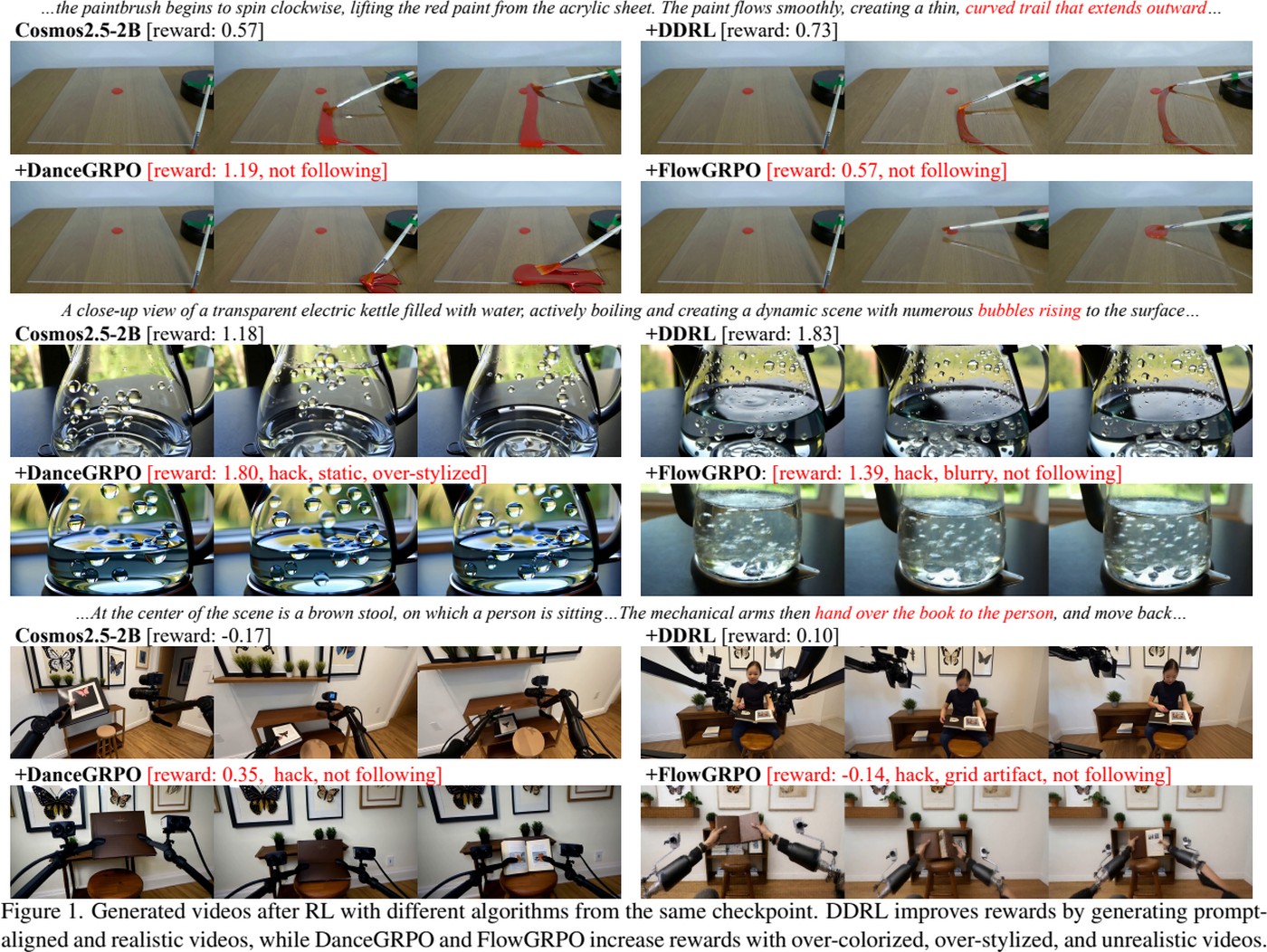

I am a Research Scientist at NVIDIA Cosmos Lab, where I work on world foundation models: video-generation models for spatio-temporal forecasting and VLMs for understanding. My work spans model design, pre-training, data curation, and evaluation of large multi-modal foundation models.

I'm driven by challenging open-ended problems that stretch what I know. Besides the usual research grind, my latest obsessions are.

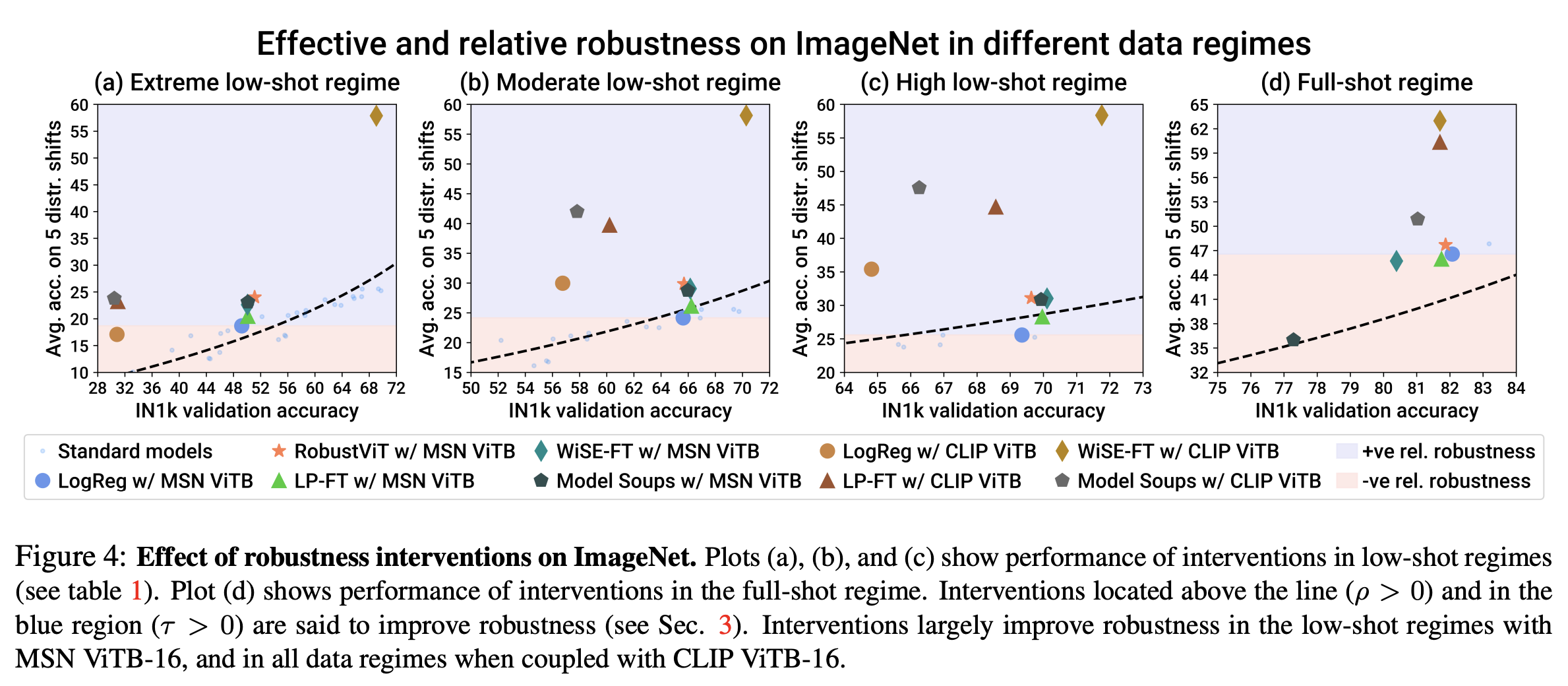

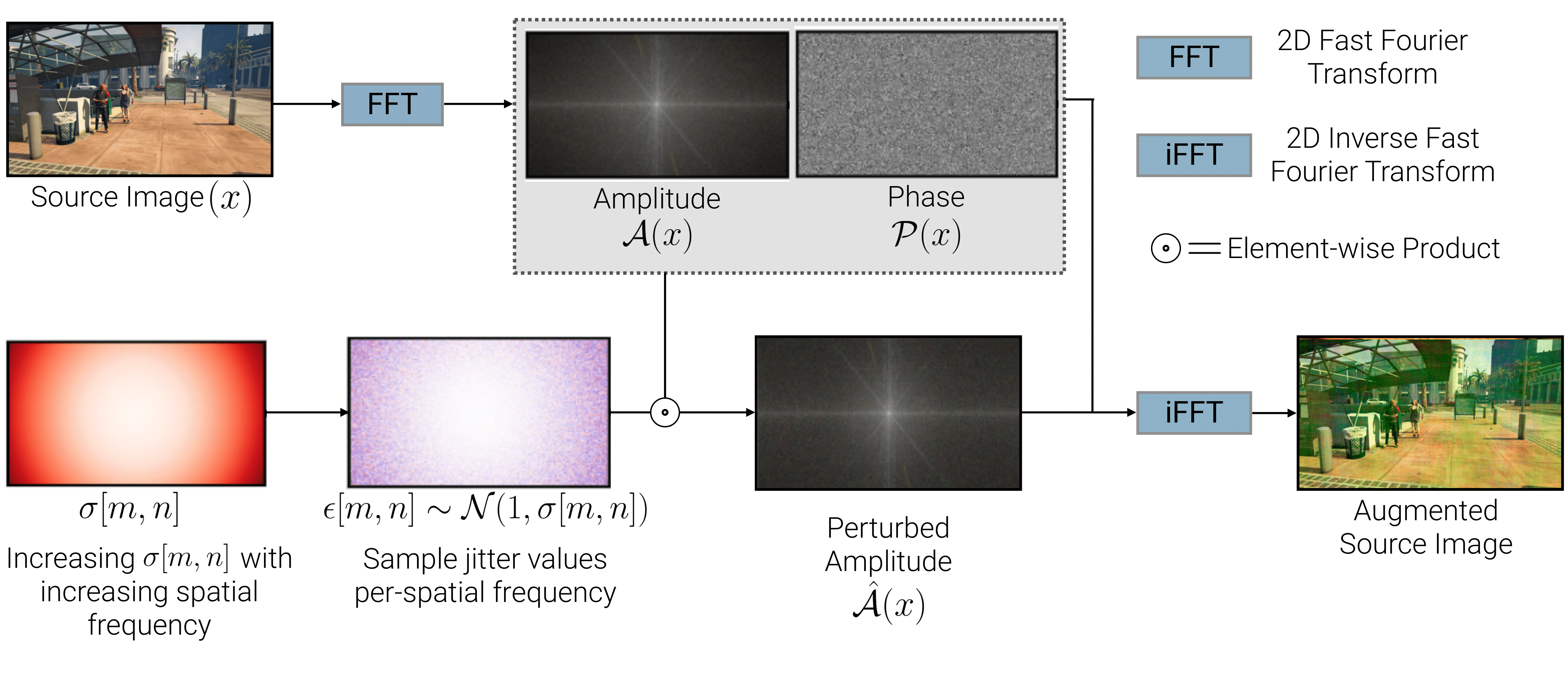

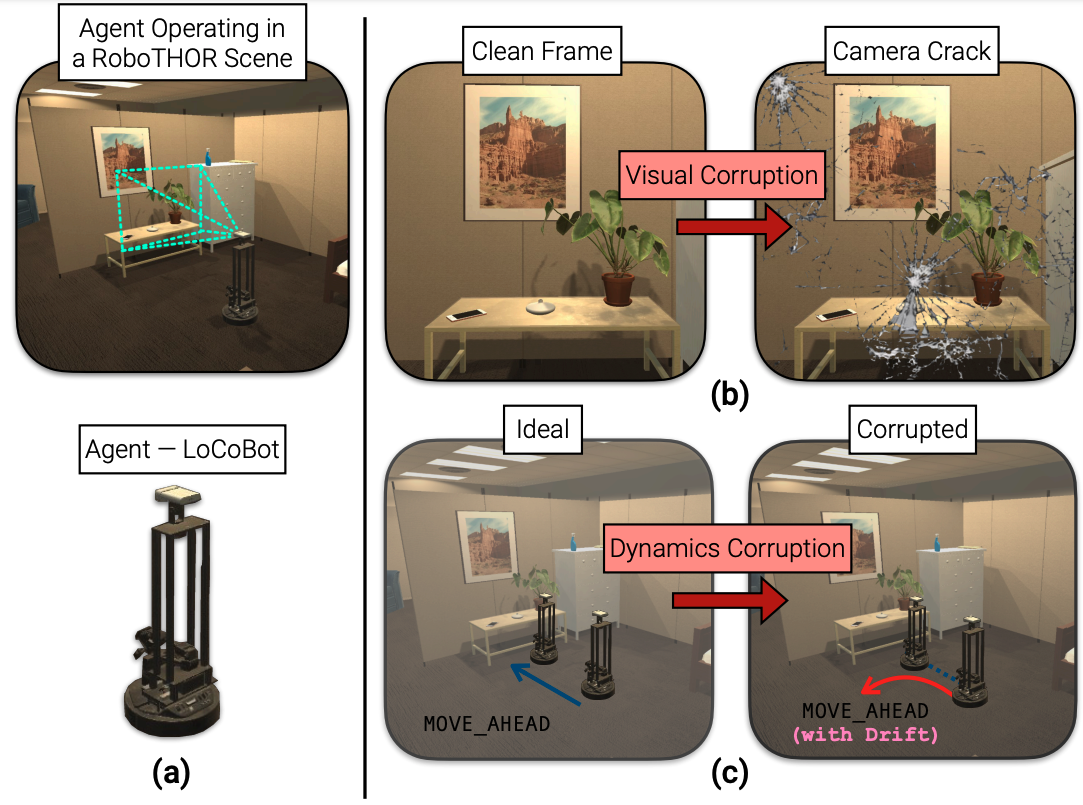

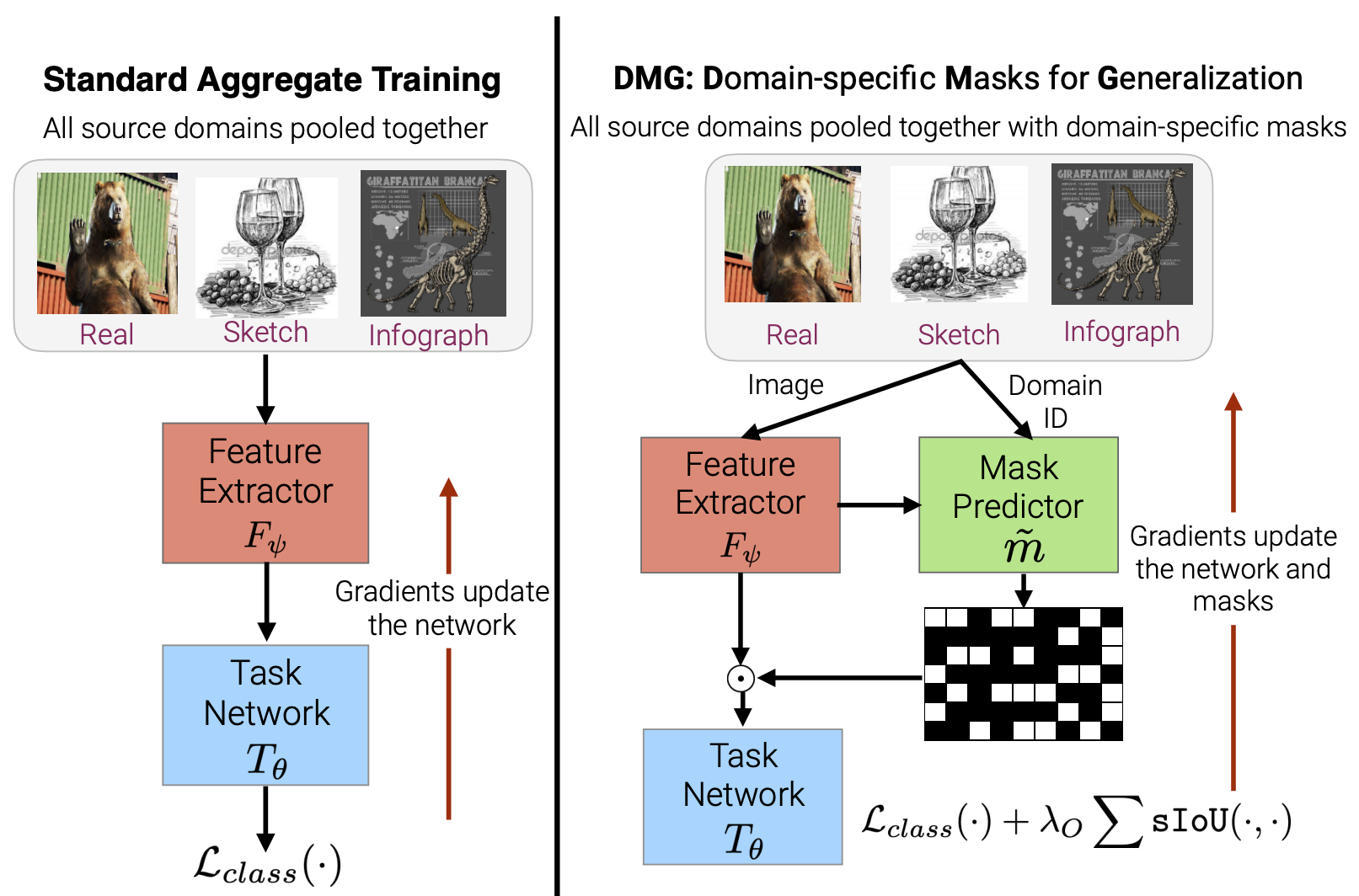

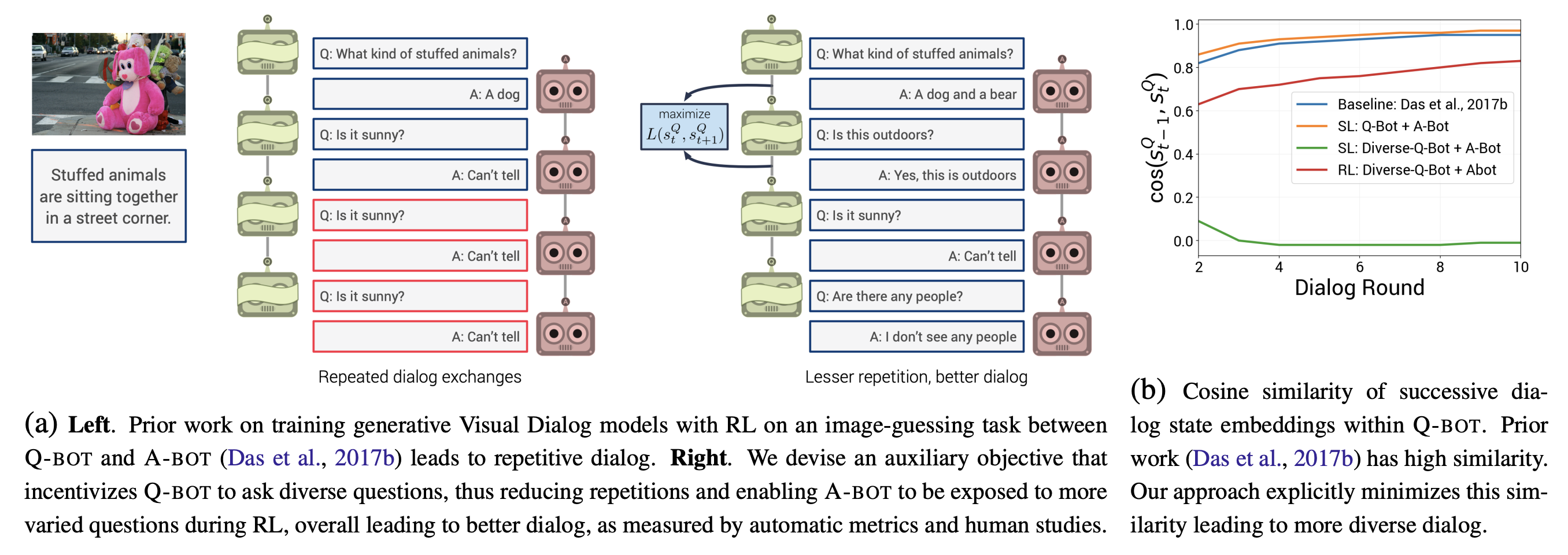

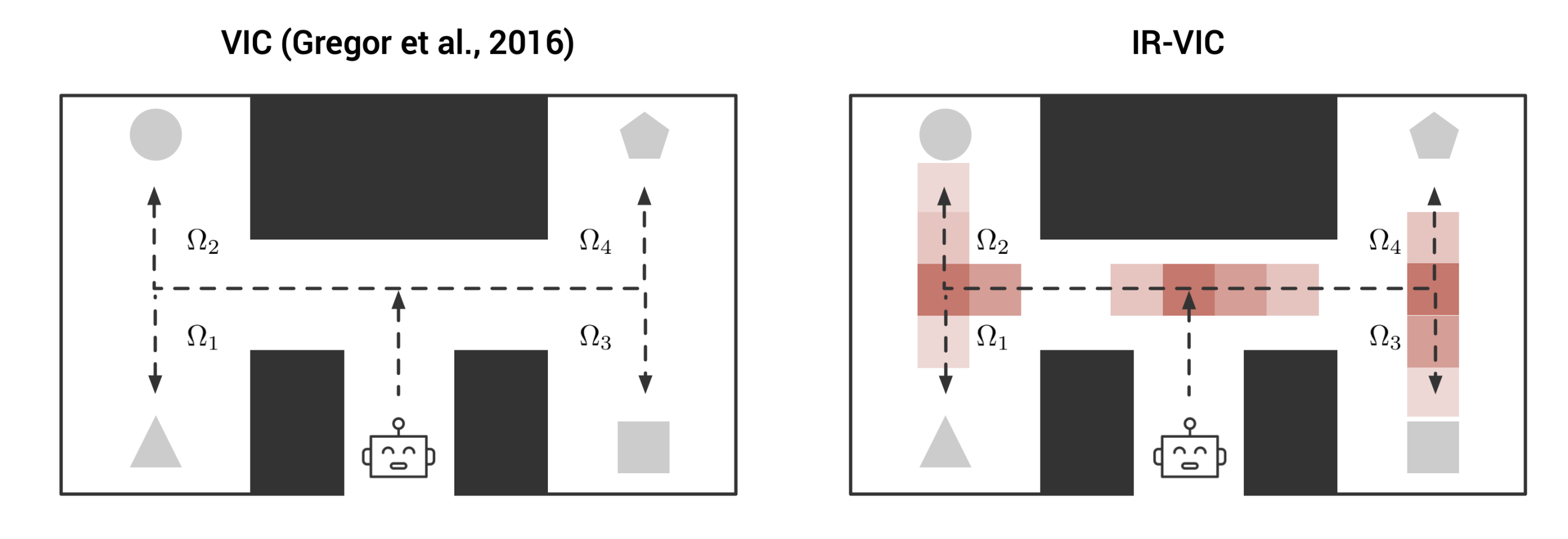

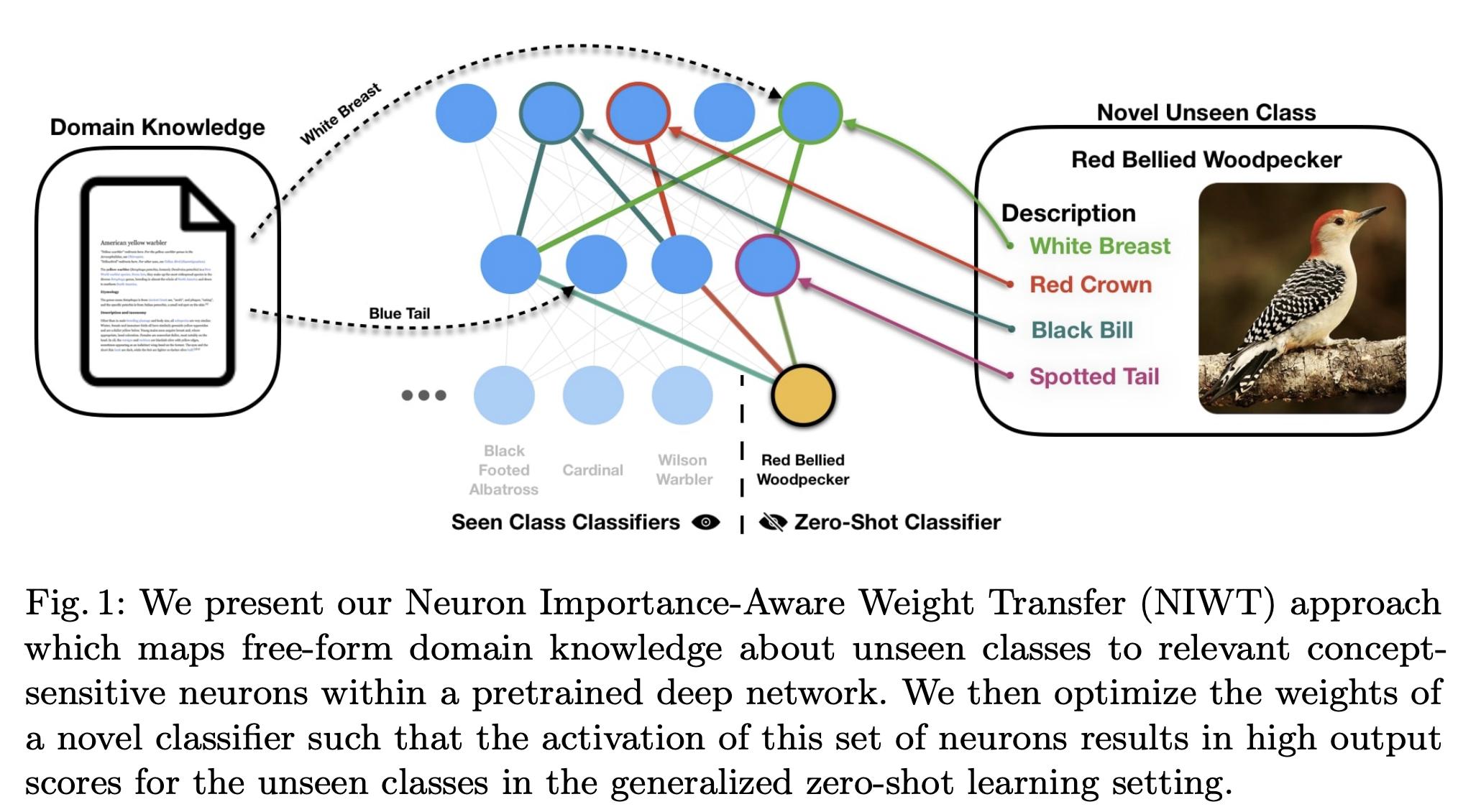

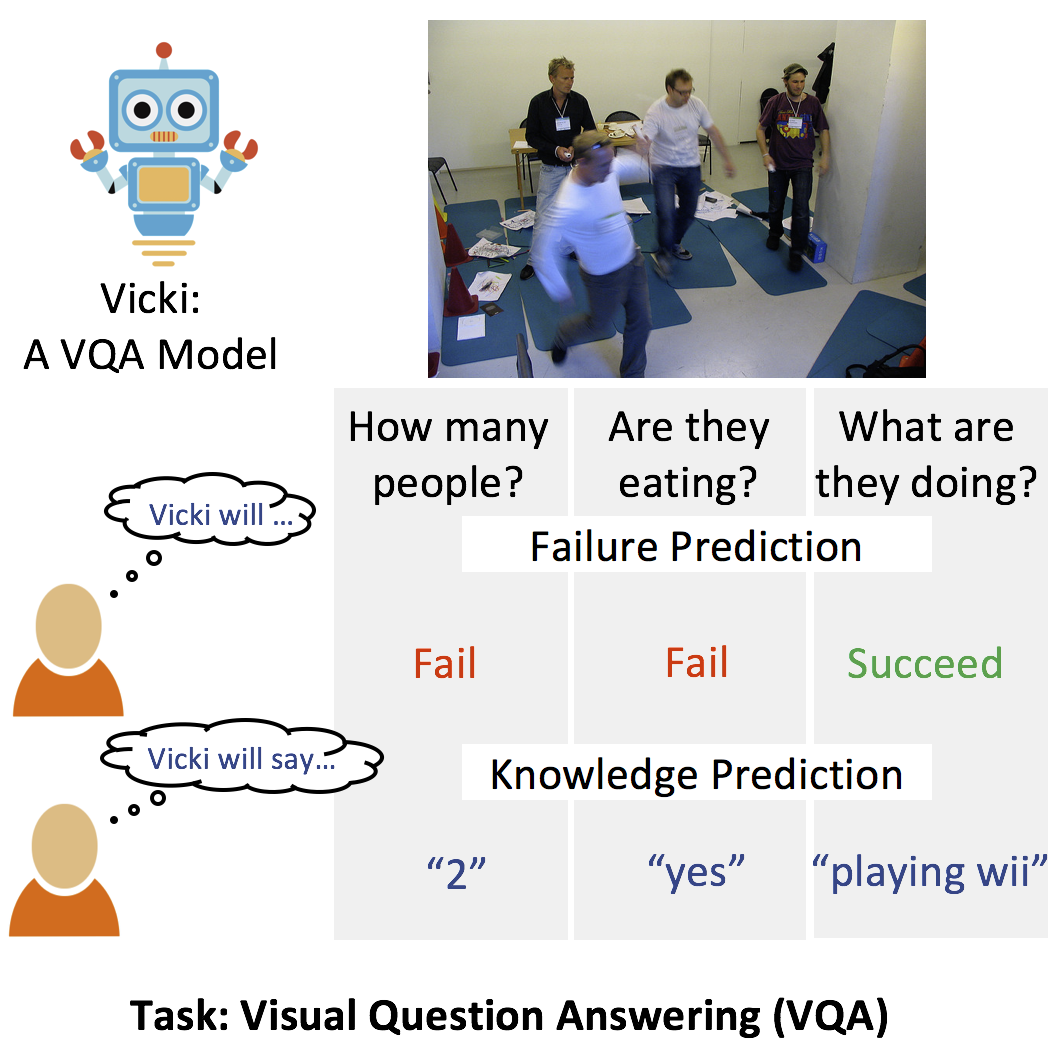

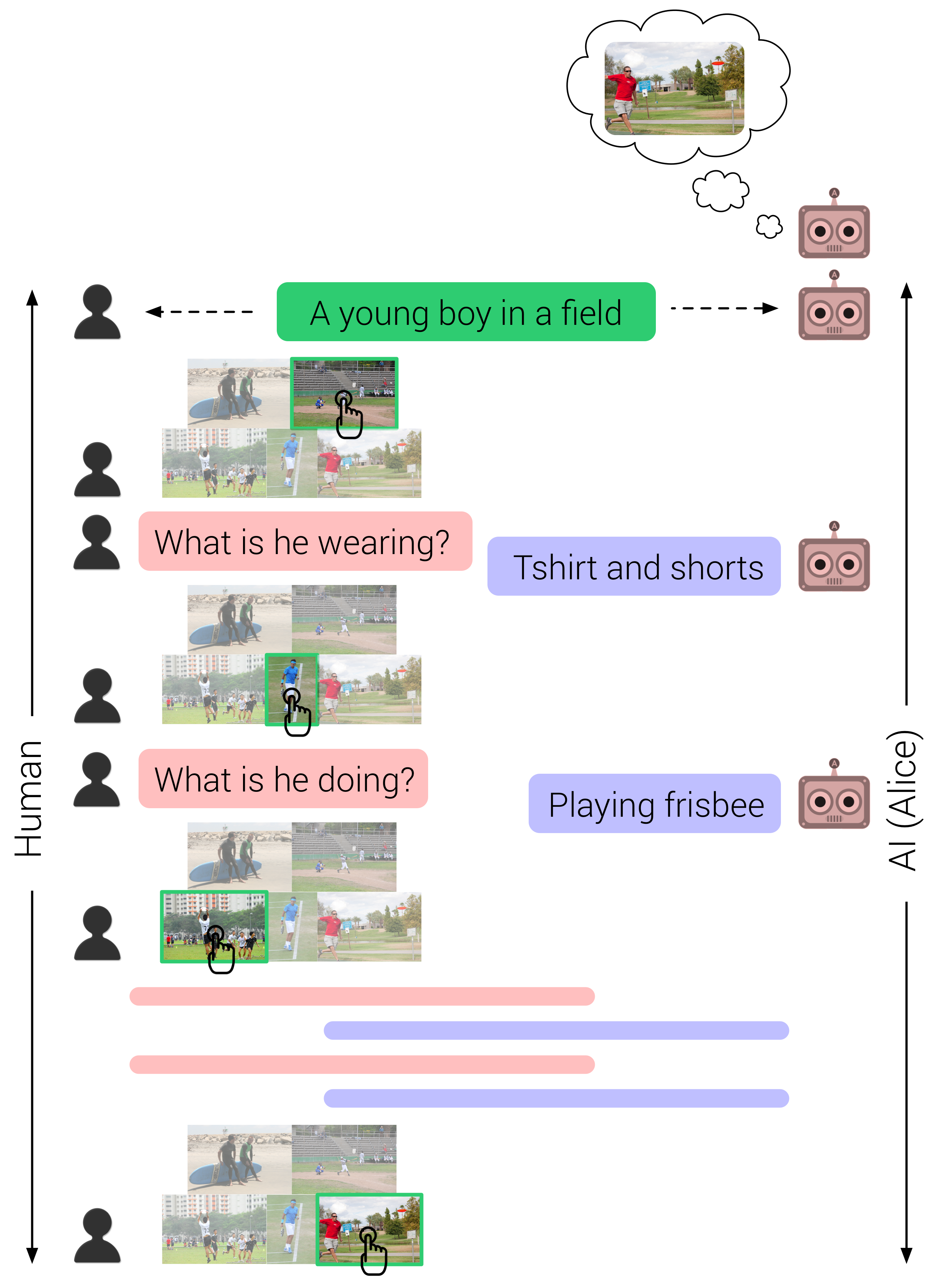

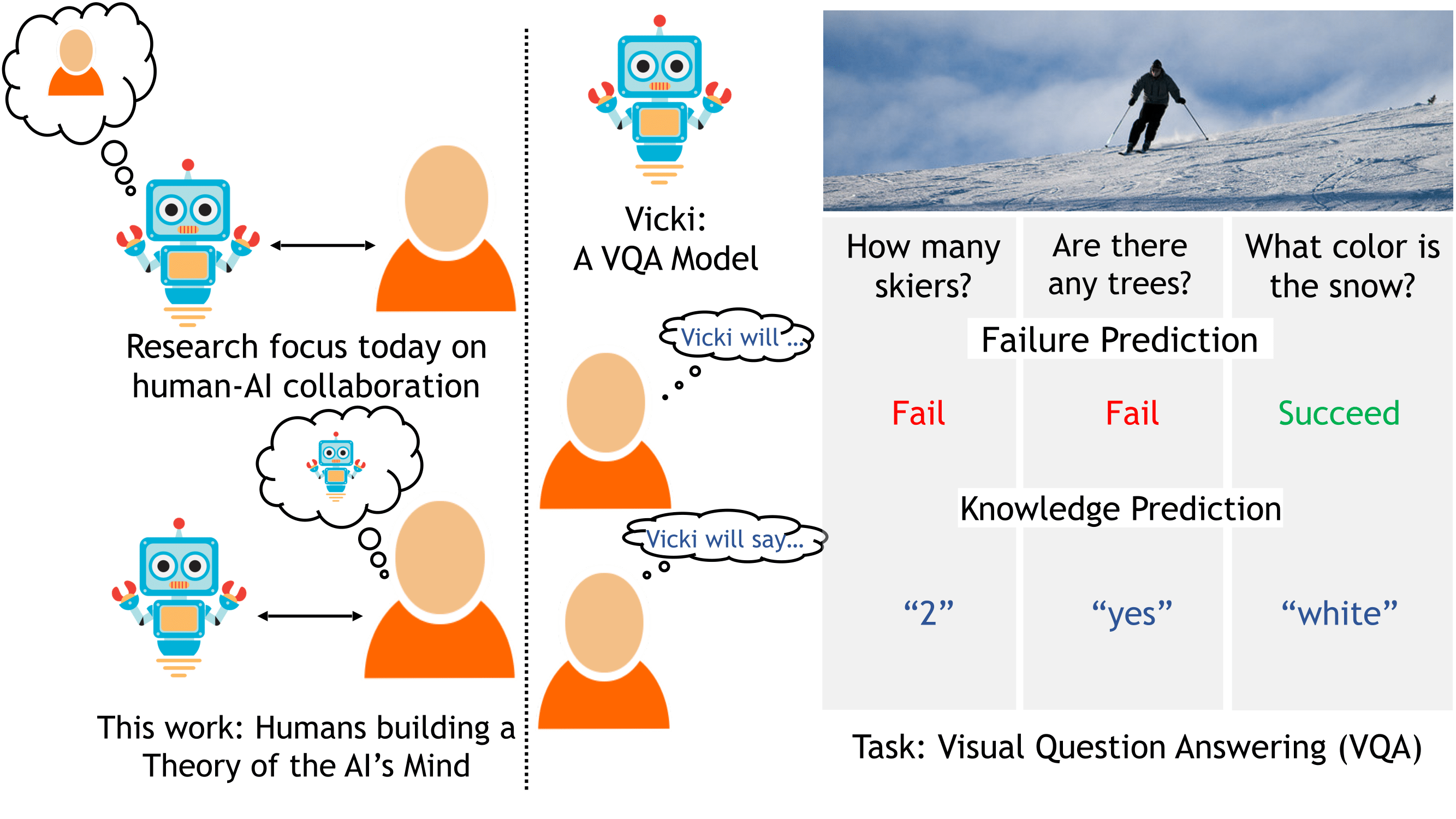

My past work has spanned core computer vision (generalization, robustness, learning from limited supervision or synthetic data), the intersection of computer vision and language, and embodied AI.

I also actively review for top computer vision and machine learning conferences and workshops, and have accumulated a few reviewer awards in the process (ICCV 2025, CVPR 2023, CVPR 2022, CVPR 2021, ICLR 2022, MLRC 2021, ICML 2020, NeurIPS 2019, ICLR 2019, NeurIPS 2018).

Affiliations

2024–Present

Summer 2020, 2022

Summer 2018

2017–2024

2016–2017

Winter 2014

2012–2016

News

- June 2026: Cosmos 3 is out! Our omnimodal world foundation model leads on understanding, reasoning, generation, and action benchmarks. Check it out.

- Oct 2025: Joined the panel discussion at the Workshop on Reliable and Interactable World Models (RIWM) at ICCV 2025.

- August 2024: Started at NVIDIA as a Research Scientist.

- July 2024: Successfully defended my dissertation (slides here, dissertation here)!

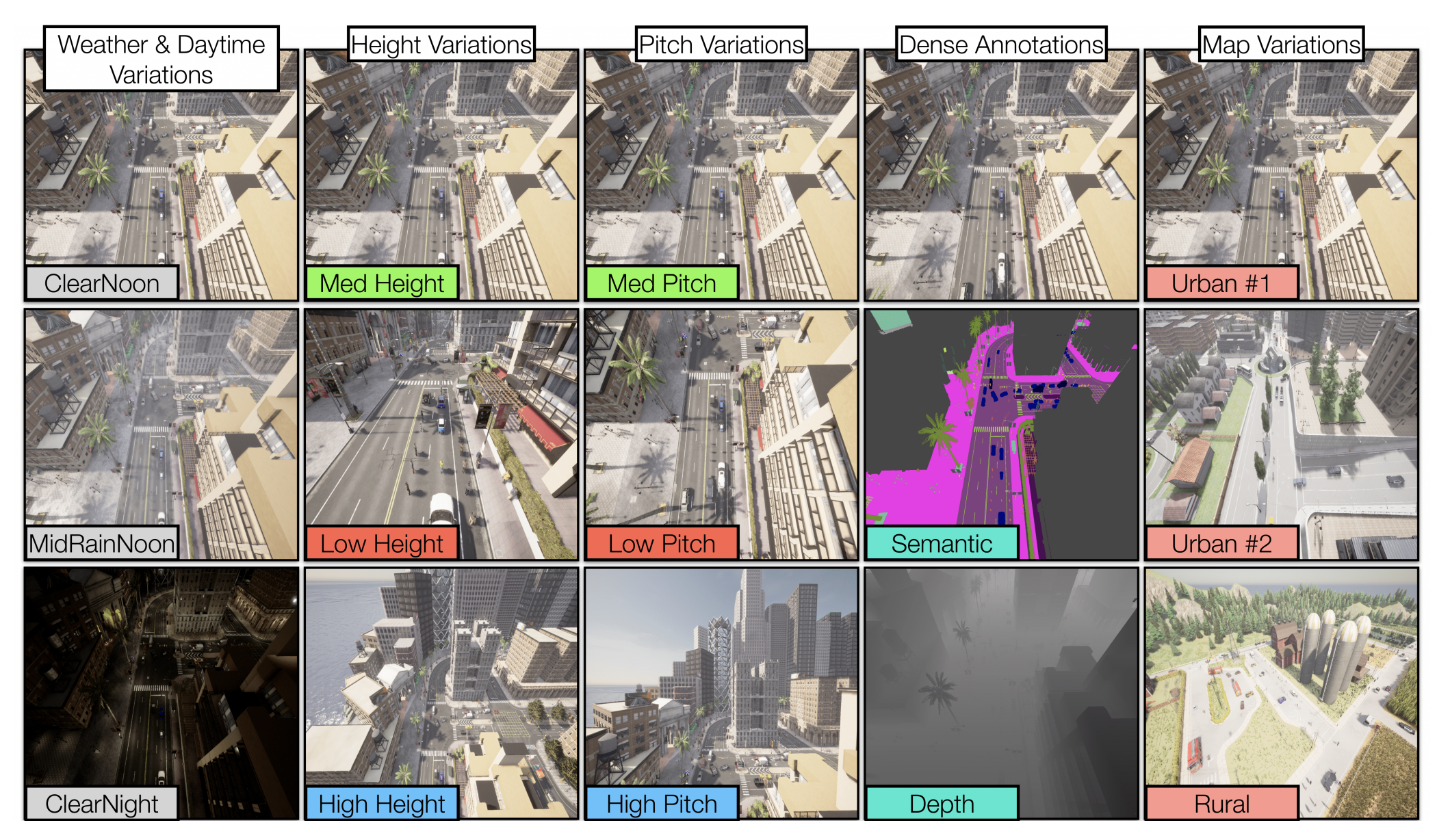

- July 2024: SkyScenes accepted to ECCV 2024 (updated preprint coming soon). ...

Research

(* indicates equal contribution)

Achievements

- Recognized as an outstanding reviewer for ICCV 2025!

- Accepted to ICCV 2023 Doctoral Consortium!

- Recognized as an outstanding reviewer for CVPR 2023!

- Recognized as an outstanding reviewer for CVPR 2022!

- Recognized as a highlighted reviewer for ICLR 2022!

- Recognized as an outstanding reviewer for CVPR 2021!

- Recognized as an outstanding reviewer for ML Reproducibility Challenge 2021!

- Recognized as being among the top 33 percent of reviewers for ICML 2020!

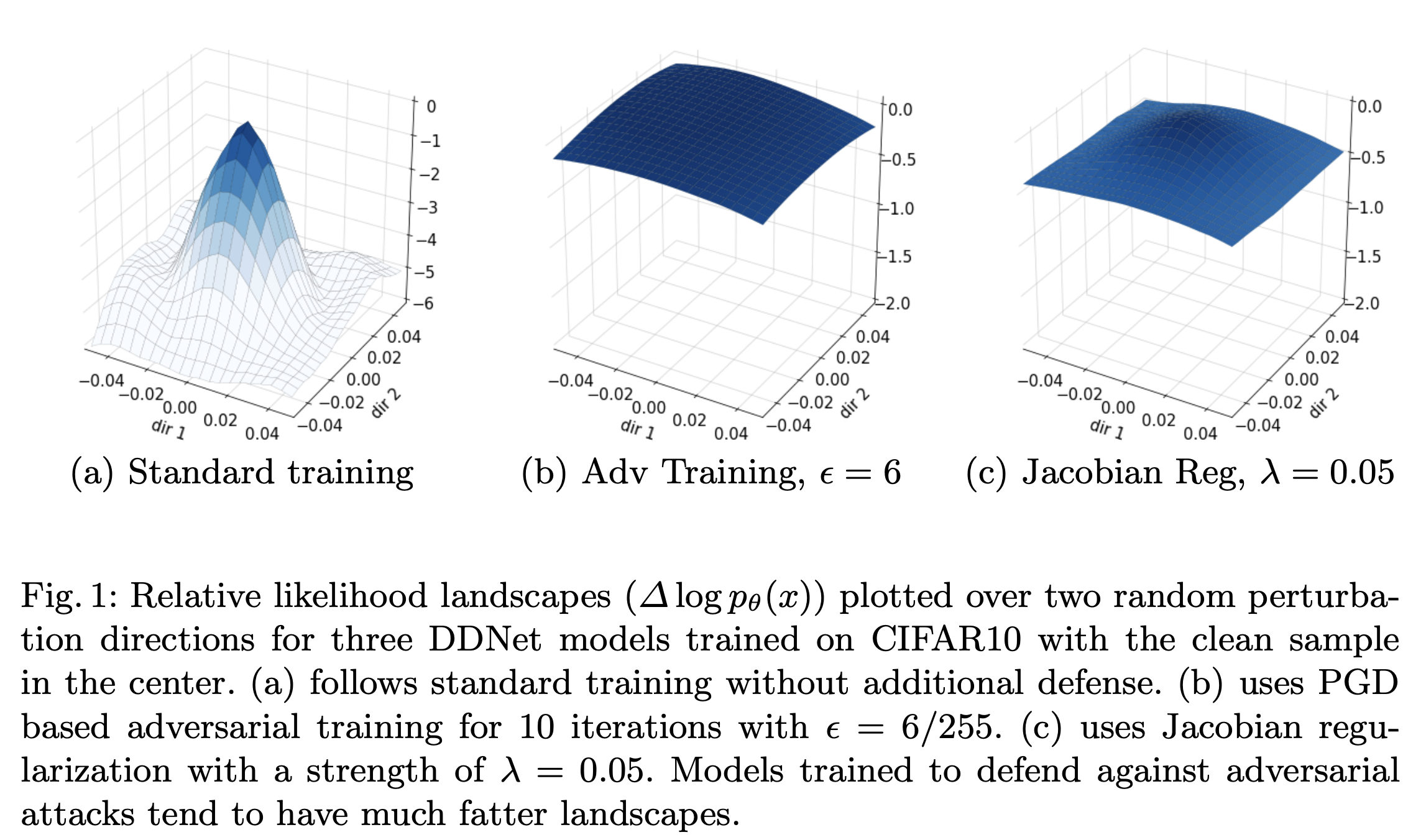

- Likelihood Landscapes received the NVIDIA Best Runner Up paper award at AROW, ECCV 2020!

- Awarded the College of Computing's Rising Star Doctoral Student Research Award (formerly known as the CS7001 Research Award) at Georgia Tech! ...